Granular analysis of AI in design curriculum

Beyond Binary Thinking: A Granular Approach to AI’s Impact on Design Practice

Why sweeping generalisations about AI and the professions aren’t helping—and what we can do instead.

Design’s Exposure to AI Assessment Tool

Claude Opus 4.6 Marks its own homework

The Problem with Broad Assessments

Conversations about AI’s impact on professional practice tend toward the binary. Will AI replace designers? Yes or no? This framing feels urgent but produces little actionable insight. It forces us into camps—techno-optimists versus anxious resisters—while the actual transformation unfolds in ways neither position captures.

A blanket assessment of AI’s impact on “design” (or any profession) lacks the granularity needed for clear thinking. Design encompasses hundreds of distinct activities: conducting user interviews, synthesising research findings, sketching concepts, specifying materials, facilitating workshops, negotiating with stakeholders. These tasks vary enormously in their cognitive demands, their reliance on human presence, and their susceptibility to AI assistance.

Treating them as a monolithic whole produces sweeping generalisations that obscure more than they reveal.

The Case for Granularity

If we want to understand where AI will genuinely reshape professional practice, we need to decompose the work into its constituent parts and examine each on its own terms.

This isn’t about predicting specific AI capabilities—that’s a fool’s errand beyond short timeframes. It’s about creating a structured framework for thinking: What does a designer actually do? Which of those activities involve pattern recognition in structured data versus judgment in ambiguous situations? Which require physical presence or relational trust? Which are bottlenecked by human processing speed versus human creativity?

A granular approach encourages clearer, more honest assessment. Instead of asking “Will AI replace designers?” we can ask more useful questions: “Which specific tasks are likely to be transformed within the next one to three years? Where will the expertise threshold shift? What new capabilities will designers need to develop?”

Skill and Cognitive Offload

Here’s the insight that changes how we should think about this: full AI handoff is not necessary to fundamentally change professional practice.

The real concern is the point at which a non-designer, working with AI tools under the direction of a manager, could replace a skilled design graduate. Given the leverage of scale that AI provides, the economics of that model could prove irresistible to organisations under cost pressure.

Even if only some elements of the role are displaced, the consequences compound. Fewer vacancies for junior designers mean the career on-ramp is severely affected. The traditional path (gaining experience through entry-level positions, learning from senior practitioners, gradually building expertise) narrows or disappears entirely.

This isn’t about AI autonomously replacing humans. It’s about AI compressing the experience curve, reducing the premium on hard-won expertise, and shifting what it means to be “qualified” for professional work. The questions this raises deserve more than hand-waving—they require systematic examination of where skill offload is already happening and where it’s likely to emerge.

A Growing Gulf in Understanding

There’s another dimension to this that concerns me: a widening gap between those who are actively engaging with AI tools and those who are not following developments closely.

For practitioners and educators immersed in this space, the pace of capability improvement is visceral. We see tools that couldn’t summarise a document coherently eighteen months ago now producing credible first drafts of complex analyses. We watch image generation evolve from curiosity to production tool. We experiment with AI-assisted coding, writing, and design workflows daily.

For colleagues whose attention is elsewhere—and there are many legitimate demands on everyone’s attention—perceptions of AI capability may lag reality by years. The gap between “what I tried once and found underwhelming” and “what’s possible today with current tools and techniques” is substantial and growing.

This asymmetry creates risk. Curriculum decisions, hiring practices, and professional development investments made on outdated assumptions may leave institutions and individuals poorly positioned for a transformed landscape.

Building the Framework

To move from abstract concern to structured analysis, I built an assessment tool grounded in design theory and common methods of practice.

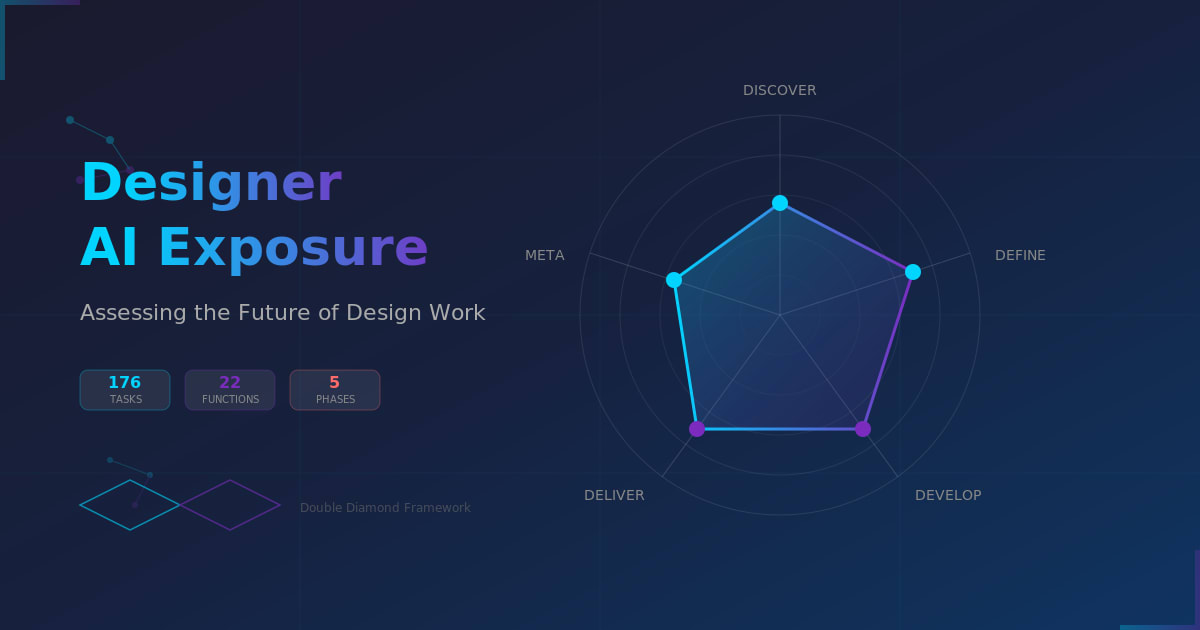

The structure draws on the Design Council’s Double Diamond—a widely recognised model of divergent and convergent phases—but goes further by decomposing each phase into distinct functions (roles designers play) and specific tasks (concrete activities).

To ensure the framework wasn’t merely a practitioner’s checklist, I grounded it in foundational design theory:

- Schön’s reflective practice and knowing-in-action

- Dorst and Cross’s research on problem-solution co-evolution

- Dorst’s frame innovation and abductive reasoning

- Rittel and Webber’s characterisation of wicked problems

- Kolko’s work on design synthesis

- Sanders and Stappers’ participatory and co-design perspectives

- Krippendorff’s semantic turn—design as meaning-making

- Norman’s human-centred design principles

This theoretical grounding matters because it surfaces activities that might otherwise be invisible: reflection-in-action, frame creation, navigating stakeholder value conflicts, managing the co-evolution of problem and solution understanding. These are precisely the kinds of activities where AI exposure is most contested.

The result is a framework of 22 functions and 176 individual tasks, organised across the four Double Diamond phases plus a cross-cutting “Reflective Practitioner” meta-function.

Critically, the framework is deliberately agnostic to specific design specialisation. Whether you practise industrial design, service design, UX, or communication design, the underlying cognitive activities (researching, synthesising, framing, ideating, prototyping, evaluating, specifying, communicating) remain recognisable. This generality makes the framework adaptable across contexts.
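For readers who think in data structures, the phase, function, and task hierarchy described above can be sketched as nested records. This is only an illustration: the class names, the `count_tasks` helper, and the function names "Researcher" and "Framer" are hypothetical, and the handful of tasks shown is a tiny sample, not the tool's actual inventory of 22 functions and 176 tasks.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """A concrete activity, e.g. conducting user interviews."""
    name: str


@dataclass
class Function:
    """A role a designer plays within a phase (names here are hypothetical)."""
    name: str
    tasks: list[Task] = field(default_factory=list)


@dataclass
class Phase:
    """One Double Diamond phase: Discover, Define, Develop, or Deliver."""
    name: str
    functions: list[Function] = field(default_factory=list)


def count_tasks(phases: list[Phase]) -> int:
    """Total number of tasks across every function in every phase."""
    return sum(len(fn.tasks) for ph in phases for fn in ph.functions)


# Illustrative sample only, not the real task inventory.
framework = [
    Phase("Discover", [
        Function("Researcher", [
            Task("Conduct user interviews"),
            Task("Synthesise research findings"),
        ]),
    ]),
    Phase("Define", [
        Function("Framer", [Task("Frame the problem")]),
    ]),
]

print(count_tasks(framework))
```

Keeping tasks as the leaf unit is what makes per-task rating possible; a specialisation-specific version would swap the task lists while leaving the shape unchanged.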

The Rating Scale

Each task can be rated on a timeline scale reflecting when AI is likely to enable significant skill offload:

| Rating | Label | Timeframe |
|---|---|---|
| 5 | Now | Already happening |
| 4 | Imminent | Within 12 months |
| 3 | Near-term | 1–3 years |
| 2 | Mid-term | 3–5 years |
| 1 | Long-term | 5–10 years |
| 0 | Distant / Never | Beyond prediction or irreducibly human |

A caveat accompanies the scale: predictions beyond five years are highly speculative. The tool is designed to prompt structured discussion, not to claim false precision about an uncertain future.
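The scale translates naturally into a small lookup, which may be useful to anyone analysing exported ratings. The sketch below is an assumption about how one might encode it, not the tool's own code: the `Rating` enum, the `TIMEFRAMES` mapping, and the `is_speculative` helper are hypothetical names. The helper simply flags the ratings whose timeframe extends beyond five years, in line with the caveat above.

```python
from enum import IntEnum


class Rating(IntEnum):
    """Timeline scale: higher values mean earlier expected skill offload."""
    DISTANT_OR_NEVER = 0
    LONG_TERM = 1
    MID_TERM = 2
    NEAR_TERM = 3
    IMMINENT = 4
    NOW = 5


# Timeframe text taken from the rating table.
TIMEFRAMES = {
    Rating.NOW: "Already happening",
    Rating.IMMINENT: "Within 12 months",
    Rating.NEAR_TERM: "1–3 years",
    Rating.MID_TERM: "3–5 years",
    Rating.LONG_TERM: "5–10 years",
    Rating.DISTANT_OR_NEVER: "Beyond prediction or irreducibly human",
}


def is_speculative(rating: Rating) -> bool:
    """True when the rating's timeframe extends beyond five years."""
    return rating <= Rating.LONG_TERM


for rating in sorted(Rating, reverse=True):
    note = " (highly speculative)" if is_speculative(rating) else ""
    print(f"{rating.value} {rating.name}: {TIMEFRAMES[rating]}{note}")
```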

Using and Adapting the Tool

The interactive tool allows users to navigate through phases, expand functions to see their constituent tasks, rate each task, and export results (with optional name and comments) as CSV or JSON for further analysis.
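As a rough idea of what such an export could look like, here is a minimal sketch using only Python's standard library. The field names (`phase`, `function`, `task`, `rating`) and the two helper functions are assumptions made for illustration; the tool's actual export schema may differ.

```python
import csv
import io
import json

# Hypothetical column layout; the real tool's export may use other names.
FIELDS = ["name", "comments", "phase", "function", "task", "rating"]


def export_json(ratings, name="", comments=""):
    """Serialise ratings plus the optional name and comments as JSON."""
    payload = {"name": name, "comments": comments, "ratings": ratings}
    return json.dumps(payload, indent=2, ensure_ascii=False)


def export_csv(ratings, name="", comments=""):
    """Serialise ratings as CSV, one row per rated task."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, extrasaction="ignore")
    writer.writeheader()
    for row in ratings:
        # Repeat the respondent details on every row so each row stands alone.
        writer.writerow({"name": name, "comments": comments, **row})
    return buffer.getvalue()


sample = [
    {"phase": "Discover", "function": "Researcher",
     "task": "Conduct user interviews", "rating": 3},
]
print(export_json(sample, name="Respondent A"))
print(export_csv(sample, name="Respondent A"))
```

JSON preserves the nesting of respondent and ratings, while the flat CSV form is easier to open in a spreadsheet.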

Intended uses include:

- Curriculum review: Which capabilities are we teaching that face near-term transformation? Which emerging capabilities are we not addressing?

- Professional development: Where should practitioners focus skill-building efforts?

- Programme planning: How might learning outcomes shift over a five-year programmatic cycle?

- Cross-disciplinary comparison: How do patterns of AI exposure differ across professional fields?

The tool was built in approximately two hours using AI assistance. This matters: a similar tool could be constructed for other disciplines or specific aspects of a programme with relatively low effort. The framework’s value lies not in its specific content but in its approach—decomposing professional activity into assessable units and inviting systematic rather than impressionistic evaluation.

Adaptation might involve:

- Adjusting the task inventory for a specific design specialisation

- Building an equivalent framework for another profession (engineering, law, medicine, teaching)

- Focusing on a particular programme component (e.g., research methods, studio practice, professional skills)

- Comparing ratings across different respondent groups (students, educators, practitioners, employers)
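The last adaptation, comparing ratings across respondent groups, lends itself to a short analysis script. The sketch below assumes each response is a record with `group`, `task`, and `rating` keys; those keys, both function names, and the sample data are hypothetical. It averages ratings per task and group, then ranks tasks by how far the group averages diverge, which is one simple way to find where a conversation about disagreement might start.

```python
from collections import defaultdict
from statistics import mean


def mean_rating_by_group(responses):
    """Return {task: {group: mean rating}} from a flat list of responses."""
    grouped = defaultdict(lambda: defaultdict(list))
    for response in responses:
        grouped[response["task"]][response["group"]].append(response["rating"])
    return {
        task: {group: mean(values) for group, values in groups.items()}
        for task, groups in grouped.items()
    }


def largest_disagreements(means, top=3):
    """Tasks ordered by the gap between the highest and lowest group mean."""
    spread = {
        task: max(groups.values()) - min(groups.values())
        for task, groups in means.items()
    }
    return sorted(spread, key=spread.get, reverse=True)[:top]


# Invented sample data, for illustration only.
responses = [
    {"group": "students", "task": "Sketch concepts", "rating": 5},
    {"group": "students", "task": "Sketch concepts", "rating": 4},
    {"group": "educators", "task": "Sketch concepts", "rating": 2},
    {"group": "students", "task": "Facilitate workshops", "rating": 1},
    {"group": "educators", "task": "Facilitate workshops", "rating": 1},
]

means = mean_rating_by_group(responses)
print(means)
print(largest_disagreements(means, top=1))
```

A mean is a blunt summary for a six-point ordinal scale; with real data, looking at the full distribution per group would be a reasonable next step.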

An Invitation to Clearer Thinking

The goal of this work is not to produce definitive predictions—no one can reliably forecast AI’s trajectory. The goal is to replace vague anxiety or dismissive optimism with structured inquiry.

By breaking professional activity into its component parts, we can have more productive conversations: Which specific tasks do we believe are most exposed? Where do we disagree, and why? What assumptions about AI capability underlie our ratings? How should our findings inform curriculum, hiring, and professional development decisions?

These conversations are overdue. The transformation is already underway, unevenly distributed but accelerating. The question is whether we engage with it systematically or continue to trade generalisations while the ground shifts beneath us.

The Designer Functions AI Exposure Assessment tool and supporting documentation are available for use and adaptation. The framework is offered as a starting point for structured thinking—contributions, critiques, and adaptations are welcome.

References

- Buchanan, R. (1992). Wicked Problems in Design Thinking. Design Issues, 8(2), 5–21.

- Cross, N. (2011). Design Thinking: Understanding How Designers Think and Work. Berg.

- Design Council. (2019). Framework for Innovation: Design Council’s Evolved Double Diamond.

- Dorst, K. (2015). Frame Innovation: Create New Thinking by Design. MIT Press.

- Dorst, K., & Cross, N. (2001). Creativity in the Design Process: Co-evolution of Problem–Solution. Design Studies, 22(5), 425–437.

- Kolko, J. (2010). Abductive Thinking and Sensemaking: The Drivers of Design Synthesis. Design Issues, 26(1), 15–28.

- Krippendorff, K. (2006). The Semantic Turn: A New Foundation for Design. CRC Press.

- Norman, D. A. (2013). The Design of Everyday Things: Revised and Expanded Edition. Basic Books.

- Rittel, H. W. J., & Webber, M. M. (1973). Dilemmas in a General Theory of Planning. Policy Sciences, 4(2), 155–169.

- Sanders, E. B.-N., & Stappers, P. J. (2008). Co-creation and the New Landscapes of Design. CoDesign, 4(1), 5–18.

- Schön, D. A. (1983). The Reflective Practitioner: How Professionals Think in Action. Basic Books.

The Designer Functions AI Exposure Assessment tool and this accompanying blog post were co-produced with Claude Opus 4.5 (Anthropic). The framework development, tool construction, and writing process took approximately two hours of collaborative work.